
Fastlead leadership development

The more effective way to develop frontline leaders, middle managers and sales leaders

The Fastlead coaching process challenges every participant to create a personal growth plan

Making theory practical: the benefits of episodic learning

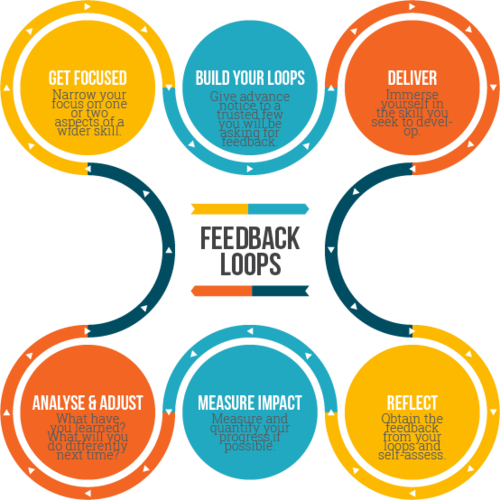

Participants mix coaching and learning from frontline leader peers with concise, challenging pre- and post-work exercises that embed their learning.

Between coaching sessions, they try out the new techniques they’ve learned in their day-to-day work.

Learn, embed, apply: the personal growth plan

Every Fastlead participant creates a Personal Growth Plan in collaboration with their manager and coach. Throughout the program, it’s continually updated with real-life actions the participant will take to embed their learning.

Concise learning for busy leaders

Pre- and post-work always consists of concise, achievable exercises that embed learning. For instance, pre-work may ask the participant to spend a maximum of 20 minutes reflecting on how they personally delegate or manage upward, then to add ideas for improvement to their action plan.

We ask managers to get and stay involved

Fastlead features regular three-way check-ins and debriefs for the manager, coach, and participant.

There are two benefits: participants don’t forget what their manager asked them to achieve, and managers are reminded to offer the participant plenty of opportunities to embed new skills.

Quickly, efficiently organised - with no extra work for you.

Don’t worry: our program management team and automated systems do all the logistics work of keeping sessions and pre- and post-work in sync.

Our client portal keeps you updated on the learning progress of your small groups, their participants, and their action plans.


"Thank you for investing in us".

Fastlead is often the first intensive coaching a frontline leader has had, so they’re flattered, inspired and challenged.

Their confidence and decision making benefit enormously from honest discussion of their fears and expectations with peers.

17 Fastlead modules help build new behaviours and better leadership

Decision Making

Inclusive Leadership

Wellbeing at Work

My Leadership Brand

Coaching and Developing Others

Delegation

Effective Communication

Emotional Intelligence

Engaging and Motivating Others

Having Courageous Conversations

Influencing Without Authority

Leading Through Change

Managing Up

Self Awareness

Setting Performance Expectations

Setting Priorities

Team Purpose

Read more about our successful HR team clients

Case study

UnitingCare QLD: ensuring quality, consistent leadership across 26,000 staff and volunteers

Case study

Fastlead at Dulux Group - Flexible, local, on topic and personal.

Case study

Inchcape: driving highly effective layered learning for frontline leaders
